How to Improve Brand Visibility in AI Search Engines (2026 Guide)

TL;DR

- Transition from traditional SEO to Generative Engine Optimization (GEO) tactics.

- Shift focus from keyword ranking to building verifiable Entity Authority.

- Prioritize 'Share of Model' over traditional organic traffic metrics.

- Secure brand citations within AI-synthesized answers and knowledge graphs.

- Move beyond blue links to become a trusted node in LLM training data.

The Death of the Blue Link: Brand Visibility in 2026

The era of chasing "ten blue links" isn't just dying; it’s being buried.

If you believe Gartner, traditional search volume is set to plummet by 25% by 2026. Why? Because users are tired of doing the work. They are migrating to AI-powered answer engines that do the reading for them.

If your marketing strategy is still glued to keyword stuffing and begging for generic backlinks to pump up a vanity metric like Domain Authority (DA), you are optimizing for a ghost town. In this new landscape, you aren't fighting for a click. You're fighting for a citation.

Success is no longer about traffic stats in Google Analytics. It’s about "Share of Model"—the frequency with which your brand is synthesized into the direct answer provided by an LLM.

Here is the brutal truth: High Domain Authority does not guarantee you a seat at the table.

AI assistants like ChatGPT, Claude, and Perplexity do not care about your Moz score. They care about your Entity Authority. If the AI doesn’t understand your brand as a distinct, corroborated "Entity" within its massive knowledge graph, you are invisible.

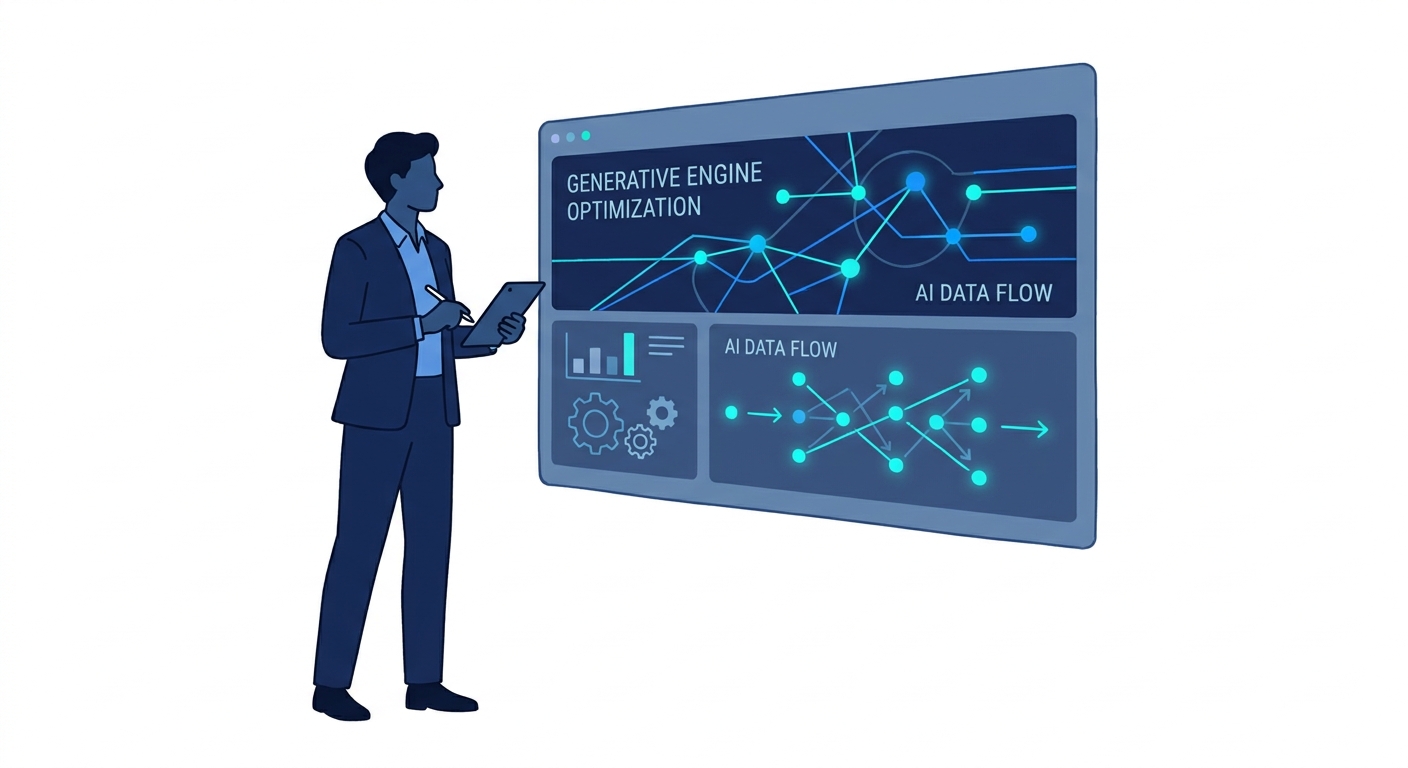

This isn't just a pivot; it's a complete rewrite of the rulebook. We are moving from Traditional SEO to Generative Engine Optimization (GEO). This guide is your survival manual for the transition from search results to synthesized answers.

The Paradigm Shift: From "Go Look" to "Here is the Answer"

To survive 2026, you have to understand the mechanics of the machine you're feeding.

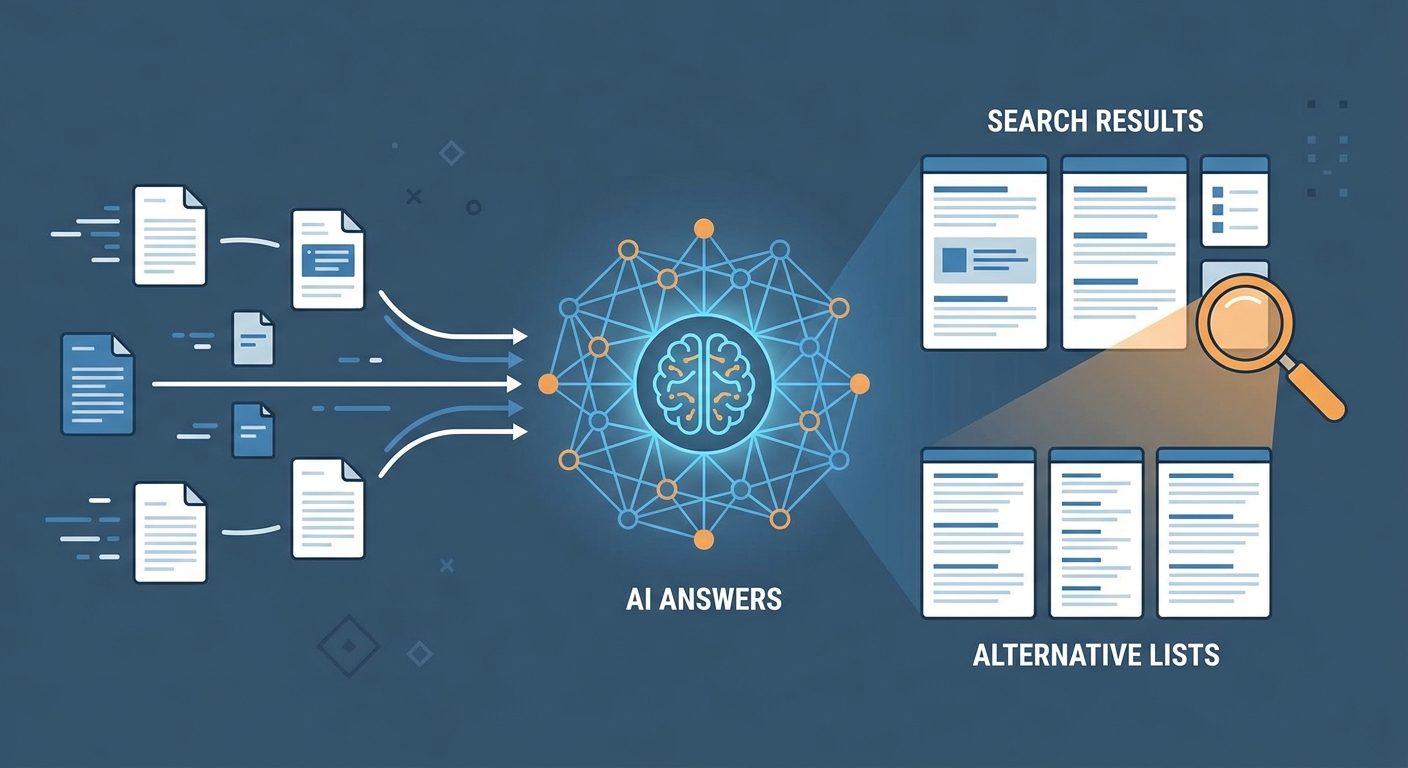

The Old Way (SEO): Think of Google’s algorithm as a librarian pointing to a shelf. You ask a question, and the librarian hands you a list of books (web pages) that might contain the answer. You, the user, have to open the books, read the pages, and synthesize the data.

The New Way (GEO): AI engines act as the expert consultant. They read the entire library before you even walk in. When you ask a question, they synthesize the information from multiple books and give you a direct, conversational answer. They don't just look for your page; they look for consensus about who you are.

This is the heartbeat of Generative Engine Optimization (GEO). As noted in recent industry analysis on mastering generative engine optimization in 2026, the goal is no longer to rank a URL. The goal is to embed your brand's facts into the weights of the Large Language Model (LLM).

If an AI cannot verify your brand's existence across multiple trusted nodes in its training data, it has two choices: hallucinate an answer (which it tries to avoid) or exclude you entirely to preserve "safety" metrics.

You are not fighting for a position on a page. You are fighting for a neuron in the model's brain.

Why "Share of Model" is the Only Market Share That Matters

We are living in a zero-click reality.

Users rarely leave the chat interface. They ask Perplexity, "What is the best enterprise CRM for fintech?" and the AI gives a synthesized paragraph. If your brand isn't mentioned in that paragraph, you effectively didn't exist for that user journey.

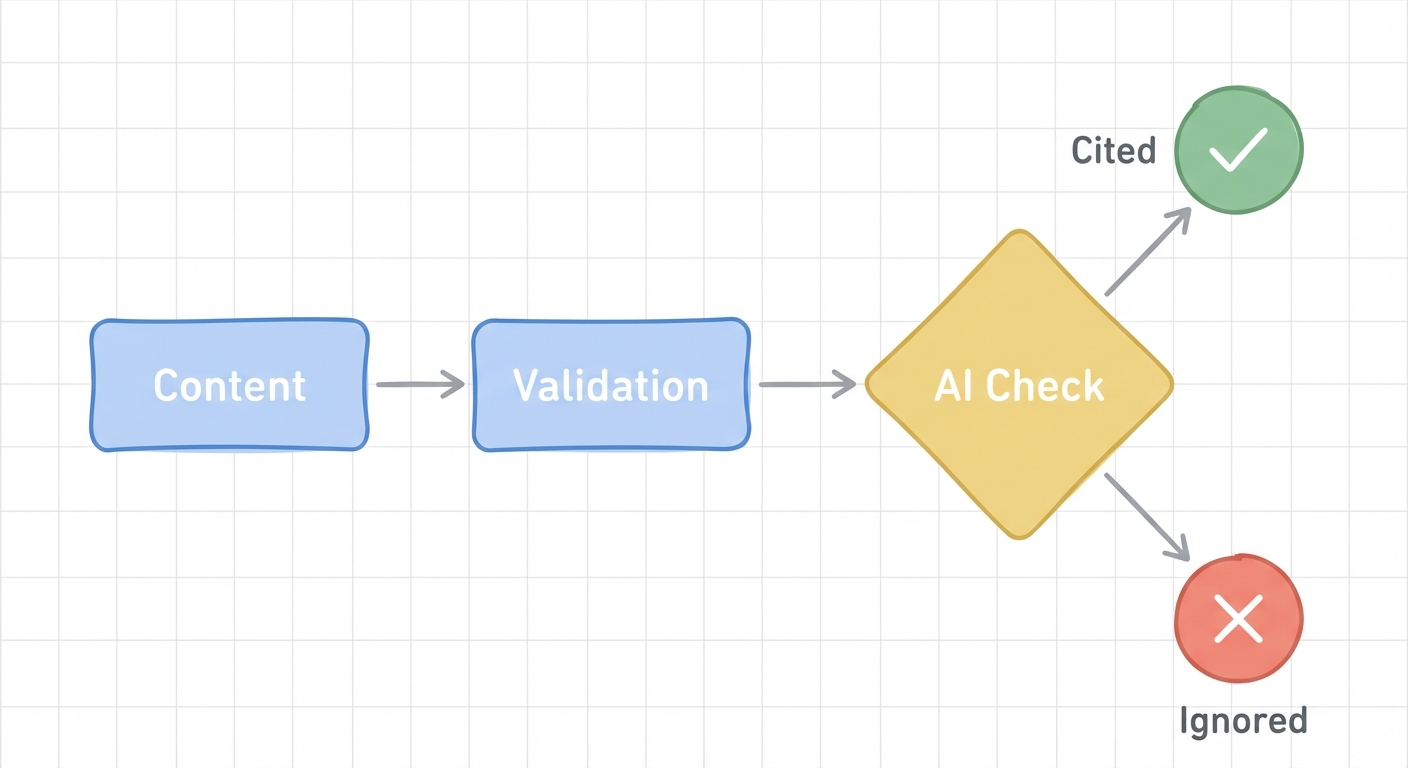

The "Confidence Score" Mechanism

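LLMs operate on probability, not intuition. A useful mental model: when an AI generates an answer, it behaves as if it assigns a "Confidence Score" to every fact and entity it considers citing. No vendor publishes such a score, but how widely a fact is corroborated is a reasonable proxy for it.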

- High Confidence: The fact appears in the brand's documentation AND is corroborated by trusted third-party sources (e.g., TechCrunch, G2, reputable industry blogs). Result: Citation.

- Low Confidence: The fact appears only on the brand's website or contradicts other data found elsewhere. Result: Exclusion.

This explains the "anomalies" we are seeing in recent case studies. For example, PivotM data on brand visibility highlighted a scenario where a DA 41 website outranked a DA 72 giant.

How?

The smaller site had a cleaner "Entity Definition." Its purpose, location, and offering were consistent across the web. The giant had conflicting data points—old addresses, mixed messaging on different platforms—that lowered the AI's confidence score. The AI trusted the smaller guy more.

The Core Strategy: Building Your "Entity Graph"

If you treat GEO like SEO, you will fail. You cannot "backlink" your way into an LLM's favor. You must build an Entity Graph that forces the AI to recognize you.

Kill Your Keywords, Build Your Entity

Stop obsessing over long-tail keywords like "cheap marketing software 2026." The AI understands semantics. It knows what "cheap" means without you typing it 50 times.

Focus on defining who you are.

Your "About Us" page, LinkedIn profile, Crunchbase entry, and G2 profile are no longer just for humans—they are the primary validation tokens for AI. If your About page says you are a "SaaS platform" but your LinkedIn says "Consultancy," the AI spots a hallucination risk. It downgrades your entity authority.

Consistency is the new keyword density.
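One way to audit this consistency is to put the key facts from each profile side by side and flag any field where they disagree. The sketch below is only an illustration: the function `find_conflicts`, the field names, and the sample profile data are all hypothetical, and the values would have to be collected by hand or by your own tooling.

```python
def find_conflicts(profiles: dict[str, dict[str, str]]) -> dict[str, dict[str, str]]:
    """Return every field whose value differs between profiles.

    Values are compared case-insensitively and with surrounding
    whitespace removed, so "Austin, TX" and "austin, tx" count as equal.
    """
    fields = {field for profile in profiles.values() for field in profile}
    conflicts = {}
    for field in sorted(fields):
        values = {
            source: profile[field]
            for source, profile in profiles.items()
            if field in profile
        }
        normalized = {value.strip().casefold() for value in values.values()}
        if len(normalized) > 1:
            conflicts[field] = values
    return conflicts


# Hypothetical sample data, for illustration only.
profiles = {
    "about_page": {"category": "SaaS platform", "hq": "Austin, TX"},
    "linkedin": {"category": "Consultancy", "hq": "Austin, TX"},
    "crunchbase": {"category": "SaaS Platform", "hq": "austin, tx"},
}

conflicts = find_conflicts(profiles)
for field, values in conflicts.items():
    print(field, values)
```

Here only `category` is flagged, because LinkedIn says "Consultancy" while the other two profiles agree. Exact matching after normalization is a deliberately crude choice; near-synonyms would need manual review.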

The Power of Co-Citations

In the world of GEO, a mention is often more valuable than a link.

AI models are trained on massive datasets where they learn associations between terms, which they represent internally as vector embeddings. If your brand name appears in "Top 10" lists alongside industry leaders like Salesforce or HubSpot, the AI learns to associate your vector embedding with those high-authority entities. You gain authority by proximity.

This is why Digital PR is shifting. Getting a "nofollow" link from a major publication used to be annoying for SEOs. For GEOs, it's gold. It provides the training data necessary to validate your existence.

Technical Handshaking: Speaking the AI's Language

Content is king, but you still need to invite the robots inside. We call this "Technical Handshaking"—explicitly formatting your site so AI crawlers can ingest your data without friction.

Implementing llms.txt

Remember robots.txt? It tells search engine crawlers which parts of your site they may fetch.

Meet llms.txt.

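This is a proposed standard for the AI era, not yet a ratified one. It is a Markdown file served from the root of your site (at /llms.txt) that explicitly points AI agents (like OpenAI's bot or Anthropic's crawler) to the pages that hold your core content. Because it is only a proposal, crawlers are not obliged to honor it.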

Why you need it: AI crawlers are expensive to run. If they have to guess where your high-value content is, they might skip it. An llms.txt file serves your white papers, documentation, and core product pages on a silver platter. It improves the odds that they are picked up during scraping and training, although no crawler guarantees it.
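As a minimal sketch, assuming the layout described in the public llms.txt proposal (an H1 title, a blockquote summary, then H2 sections made of Markdown links), the file could be generated like this. The function name `render_llms_txt`, the brand "Acme Corp", and the example.com URLs are hypothetical placeholders, not part of any standard tooling.

```python
def render_llms_txt(
    name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render llms.txt content: H1 title, blockquote summary, H2 link sections.

    Each link is a (title, url, note) tuple; an empty note is omitted.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            entry = f"- [{title}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical brand and URLs, for illustration only.
llms_txt = render_llms_txt(
    name="Acme Corp",
    summary="Acme Corp is a SaaS platform for fintech teams.",
    sections={
        "Docs": [
            ("Product overview", "https://example.com/product", "What the platform does"),
            ("Pricing", "https://example.com/pricing", ""),
        ],
        "White papers": [
            ("Security white paper", "https://example.com/security.pdf", "Compliance details"),
        ],
    },
)
print(llms_txt)
```

Save the output as llms.txt at the site root. Keeping the list short and limited to your strongest pages matches the point above: the file is a pointer to core content, not a full sitemap.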

Structured Data for the Machine

Schema markup is no longer just for rich snippets in Google; it is for defining relationships.

Use the schema.org sameAs property to link your website to your social profiles, Wikipedia page, and knowledge panels. This connects the dots for the bot, confirming that the "Acme Corp" on Twitter is the same entity as the "Acme Corp" on this website.
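A minimal sketch of that markup, built in Python so it can be templated into a page: it emits a schema.org Organization object in JSON-LD with a `sameAs` list. The helper name `organization_jsonld` and every URL below are hypothetical placeholders; swap in your real profile URLs.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str]) -> str:
    """Return a schema.org Organization description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs lists other pages that describe this same entity.
        "sameAs": same_as,
    }
    return json.dumps(data, indent=2)


# Hypothetical profile URLs, for illustration only.
markup = organization_jsonld(
    name="Acme Corp",
    url="https://example.com",
    same_as=[
        "https://www.linkedin.com/company/acme-corp-example",
        "https://www.crunchbase.com/organization/acme-corp-example",
        "https://twitter.com/acmecorpexample",
    ],
)

# JSON-LD is usually embedded in the page inside a script tag of this type.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The name used here should match the name on each linked profile exactly, since the goal is to confirm one entity across several sources.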

For a deeper dive on structuring your HTML headers and schema for maximum machine readability, refer to our guide on the anatomy of a blog post that ranks well.

Content Optimization: Writing for the "Citation"

AI models favor content that is easy to summarize and extract. If your answer is buried in paragraph four behind a wall of fluff, you will be ignored.

The Direct Answer Format

You must adopt the "TL;DR" method. Immediately following an H2 header (which should be a question or clear topic), provide the answer in a direct, bolded sentence.

- The Formula: Question (H2) -> Direct Answer (Bolded) -> Nuance/Data (Paragraphs).

This structure feeds the AI exactly what it needs to generate a snippet. It allows the model to grab the "truth" and then process the supporting details.
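The formula above can be sketched as a tiny template. This assumes your content is authored in Markdown, where `##` marks an H2 and `**` marks bold; the helper name `render_section` is hypothetical, and the sample text is borrowed from this guide's own FAQ.

```python
def render_section(question: str, direct_answer: str, details: list[str]) -> str:
    """Render one section as: H2 question, bolded direct answer, detail paragraphs."""
    parts = [f"## {question}", f"**{direct_answer}**", *details]
    # Blank lines separate Markdown blocks.
    return "\n\n".join(parts) + "\n"


section = render_section(
    question="How long does it take to improve AI search visibility?",
    direct_answer="GEO typically requires 6-12 months.",
    details=[
        "The lag comes from LLM training cycles: crawling, processing, and retraining.",
    ],
)
print(section)
```

Putting the answer in a fixed position directly under the heading means a reader, human or machine, never has to search the section for it.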

Conversational Patterns & E-E-A-T

The days of robotic, keyword-stuffed prose are over. AI is trained to prefer natural, human-sounding dialogue. It prioritizes content that demonstrates E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness).

According to best practices for enhancing AI visibility, shifting your tone from "marketing copy" to "expert interview" increases the likelihood of citation.

Use "I" and "we." Share specific anecdotes. The more your content sounds like a human expert, the more the AI weights it as a high-quality source.

The Timeline Reality: The 6-12 Month Lag

Here is the hardest pill to swallow: GEO is slow.

In traditional SEO, you could publish a post and see it rank in a few weeks (or days, if you were lucky). In GEO, you are subject to the LLM Training Cycle.

- Crawling: The bot scrapes your content.

- Processing: The data is cleaned and tokenized.

- Training/Fine-Tuning: The model is updated with new weights (this happens infrequently for major models).

This process creates a lag of 6 to 12 months. You are planting seeds today for the harvest next year.

However, this lag is also a moat. Once your brand is embedded in the model's weights—once you are part of its "long-term memory"—it is incredibly difficult for a competitor to displace you.

For more on maintaining a long-term view during this transition, read about building a sustainable SEO strategy, which emphasizes the patience required for genuine authority building.

Measuring Success in a Zero-Click World

If traffic drops, how do you know it's working?

You must stop looking at Google Analytics for validation. Start tracking Share of Model.

Currently, this involves manual auditing or using emerging AI visibility tools.

- The Test: Ask ChatGPT, Perplexity, and Gemini questions related to your product category.

- The Metric: How often is your brand mentioned? Is the sentiment positive? Are you in the top 3 recommendations?
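A manual audit like this can be scored with a few lines of Python. The sketch below treats Share of Model as the fraction of collected answers that mention the brand at least once; that definition, the function name `share_of_model`, and the sample answers are assumptions for illustration. No AI service is called here: the responses are pasted in by hand.

```python
import re


def share_of_model(brand: str, responses: list[str]) -> float:
    """Return the fraction of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    # Whole-word, case-insensitive match on the brand name.
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    mentions = sum(1 for text in responses if pattern.search(text))
    return mentions / len(responses)


# Hypothetical answers copied from AI tools, for illustration only.
responses = [
    "Top options include Salesforce, HubSpot, and Acme Corp.",
    "For fintech teams, Salesforce is the most common choice.",
    "Many reviewers recommend acme corp for its compliance features.",
    "HubSpot and Salesforce both offer enterprise tiers.",
]

share = share_of_model("Acme Corp", responses)
print(f"Share of Model: {share:.0%}")
```

Run the same prompts on a fixed schedule and track the number over time. Sentiment and ranking position, the other two questions in the metric, are not captured by this count and still need a manual read.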

While your traditional sessions might dip, your high-intent "brand search" volume should rise. Users will discover you via AI and then come to your site to convert.

To help scale the production of the structured, citation-ready content required for this new landscape, consider leveraging the best AI blog generators that are designed to output clean, machine-readable formats.

Frequently Asked Questions

How is GEO different from SEO in 2026?

SEO focuses on ranking documents (blue links) using keywords and backlinks. GEO (Generative Engine Optimization) focuses on optimizing content to be synthesized into a direct answer by AI. It relies on entity authority, data corroboration, and confidence scores.

Why isn't my high-authority website showing up in AI answers?

You likely lack "Entity Definition." Even with high Domain Authority, if the AI's training data cannot corroborate your facts across multiple trusted third-party sources (like Crunchbase, LinkedIn, or industry news), it assigns a low confidence score. It excludes you to avoid hallucinations.

What is llms.txt and do I need it?

llms.txt is a proposed standard file (similar in spirit to robots.txt) that points AI web crawlers to the content you most want them to read. In 2026, it is a useful "technical handshake" that explicitly invites AI models to prioritize your best content, although crawlers are not obliged to honor it.

How long does it take to improve AI search visibility?

Unlike traditional SEO, which can show results in 90 days or less, GEO typically requires 6-12 months. This is due to the lag time in "LLM Training Cycles": the time it takes for models to ingest new data, verify it, process it, and update their internal weights.

Can I track my brand's visibility in ChatGPT or Perplexity?

Yes, but not through Google Analytics. You must track "Share of Model"—the frequency with which your brand is cited in AI responses for your target queries. New SaaS tools are emerging specifically to audit these citations and sentiment.